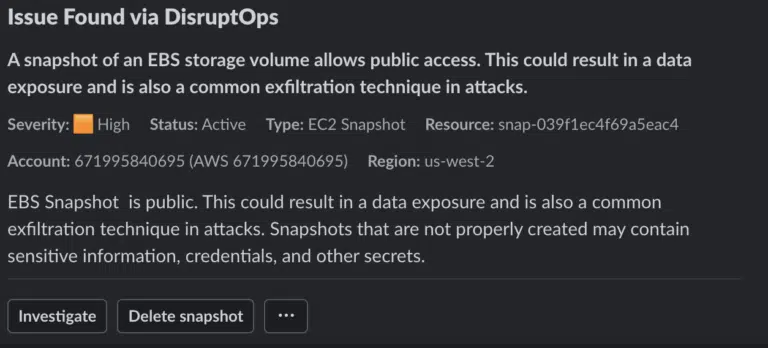

It’s Thursday afternoon and you’re getting ready to leave work a little early because… you can. But then that pesky Deliverer of Notifications (also known as Slack) pops off a new message in your security alerts channel:

Misconfigurations Have Three States of Being

This is something I’ve started calling Schrödinger’s Misconfigurations, since I have a bad habit of using principles of quantum mechanics to explain information security. I’d be stunned if you didn’t already know about Schrödinger’s Cat, the famous thought experiment that Erwin Schrödinger used to illustrate the paradox of quantum superposition to Albert Einstein. The really short version is that if you stick a cat in a box with some poison triggered by radioactive decay, the cat is neither definitively alive nor definitively dead, and is thus in a state of being alive AND dead, until you open the box and check.

Yes, that’s absurd, which was the point. Especially to those of us with cats who DO NOT LIKE BEING TRAPPED IN BOXES. Although I could do an entire blog series about cats crawling into boxes on their own but getting very angry if you put them in boxes and… I digress.

Back to cloud security. The fundamental concept behind the thought experiment is that something exists in multiple simultaneous states until you observe it, and that act of observation forces an answer. I am, of course, skewing and simplifying to meet my own needs, so those of you with physics backgrounds, please don’t send me angry emails.

The cloud version of this concept is that any given misconfiguration exists in a state of being an attack, a mistake, or a policy violation until you investigate and determine the cause.

There are five characteristics of cloud that support this concept:

- Cloud/developer teams tend to have more autonomy to directly manage their own cloud infrastructure.

- The cloud management plane is accessible via the Internet.

- The most common source (today) of cloud attacks is stolen credentials.

- Many misconfigurations create states that are identical to the actions of an attacker (e.g. making a snapshot public).

- It’s easy to create a misconfiguration by accident, and sometimes a misconfiguration is deliberate: someone makes the change to meet a need without realizing it is a security issue.
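To make the public snapshot example concrete, here is a minimal Python sketch that checks whether a CloudTrail-style record shares an EBS snapshot publicly or with another account. The function name `snapshot_exposure` is hypothetical, and the nested `requestParameters` layout is an assumption about how `ModifySnapshotAttribute` calls are typically logged, so verify it against your own logs. The point is that nothing in the record says whether a developer or an attacker with stolen credentials made the call.

```python
from typing import Any, Dict, Optional


def snapshot_exposure(event: Dict[str, Any]) -> Optional[str]:
    """Classify how a snapshot-sharing API call exposes the snapshot.

    Returns "public", "cross-account", or None when the record is not a
    sharing change. The field layout is an assumed CloudTrail shape.
    """
    if event.get("eventName") != "ModifySnapshotAttribute":
        return None

    params = event.get("requestParameters") or {}
    added = (params.get("createVolumePermission") or {}).get("add") or {}
    items = added.get("items") or []

    # The group "all" means anyone can create a volume from the snapshot.
    if any(item.get("group") == "all" for item in items):
        return "public"
    # A userId entry shares the snapshot with one specific account.
    if any("userId" in item for item in items):
        return "cross-account"
    return None


# Illustrative record with made-up identifiers.
sample = {
    "eventName": "ModifySnapshotAttribute",
    "requestParameters": {
        "snapshotId": "snap-0123456789abcdef0",
        "createVolumePermission": {"add": {"items": [{"group": "all"}]}},
    },
}
print(snapshot_exposure(sample))
```

The same return value comes back for an honest mistake and for data exfiltration, which is why the finding alone cannot tell you which state you are in.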

This concept holds true even in traditional infrastructure, albeit to a far lesser degree since teams have less autonomy. A developer on an app doesn’t typically have the ability to directly modify firewall rules and route tables. In cloud, that’s pretty common, at least for some environments.

Assume Attack Until Proven Otherwise

One of the more important principles of incident response in cloud is that you absolutely must treat misconfigurations as security events, and you have to assume they are attacks until proven otherwise.

This is a change in thinking. Security is used to thinking in terms of vulnerabilities and attack surface, but we view those as things we scan for on a periodic basis and largely treat as issues to remediate. I’m suggesting that in cloud computing we promote detected misconfigurations to the same level as an IDS or EDR alert. They aren’t merely compliance issues; they are potential indicators of compromise.

And no, this doesn’t apply to every misconfiguration in every environment. We have to filter and prioritize. Better yet, we have to communicate, because usually the easiest way to figure out if a misconfiguration is a malicious attack is to just ask the person who made the change if they meant to do that.
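The "assume attack until proven otherwise" rule, combined with asking the person who made the change, can be sketched as a small state model in Python. Everything here is illustrative: the `Cause` enum, the two questions, and the mapping from answers to causes are assumptions chosen for the example, not a prescribed workflow.

```python
from enum import Enum
from typing import Optional


class Cause(Enum):
    UNDETERMINED = "undetermined"
    ATTACK = "attack"
    MISTAKE = "mistake"
    POLICY_VIOLATION = "policy violation"


def resolve(made_change: Optional[bool], intentional: Optional[bool]) -> Cause:
    """Collapse a misconfiguration into one cause based on the owner's answers.

    None means the question has not been answered yet.
    """
    if made_change is None:
        return Cause.UNDETERMINED
    if not made_change:
        # Nobody on the team claims the change, so treat it as hostile.
        return Cause.ATTACK
    if intentional is None:
        return Cause.UNDETERMINED
    # A deliberate change that breaks the rules is a policy violation;
    # an accidental one is a mistake.
    return Cause.POLICY_VIOLATION if intentional else Cause.MISTAKE


def handle_as_incident(cause: Cause) -> bool:
    """Until someone answers, an undetermined cause is handled like an attack."""
    return cause in (Cause.UNDETERMINED, Cause.ATTACK)
```

The default matters more than the mapping: an unanswered question keeps the finding in the incident queue instead of letting it age on a dashboard.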

Since I have to distill things down for training classes, I’ve come up with three primary feeds for security telemetry:

- Logs

- Cloud provider events (e.g. Security Hub events)

- Cloud misconfigurations, which can come from your CSPM tool, OSS scanners, or similar

Most people working in cloud security have already internalized this concept, but we don’t always explain it. If you look at some Cloud Detection and Response (CDR) tools, they generate alerts on some misconfigurations. This is different from the default CSPM tool modality, which creates findings in reports and on dashboards. Those are important for compliance and general security hygiene, but since attackers do nasty things like share disk images to other accounts or backdoor access to IAM roles, a subset of misconfigurations really needs to be treated as indicators of compromise until proven otherwise.

Internally (and in the DisruptOps platform) we handle this with a set of real-time threat detectors that trigger assessments based on identified API calls. It takes about 15-30 seconds to identify a misconfiguration and send it to security and the project owner via Slack (or Teams) like you see above. These alerts are treated the same as a GuardDuty finding or any other Indicator of Compromise, but using ChatOps to validate activities also helps us triage these really quickly without having to perform a deep analysis every time.
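As a rough illustration of the general pattern (not the DisruptOps implementation, which this post does not describe in detail), the Python sketch below maps a few AWS API call names to the assessment each should trigger and builds a chat message asking the actor to confirm the change. The watchlist contents, the `build_alert` name, and the message wording are all assumptions; the `{"text": ...}` payload is the simple format Slack incoming webhooks accept, and actually sending it is left out.

```python
from typing import Any, Dict, Optional

# Hypothetical watchlist: API call name -> the assessment it should trigger.
WATCHED_CALLS = {
    "ModifySnapshotAttribute": "check whether the snapshot is shared publicly or cross-account",
    "ModifyImageAttribute": "check whether the machine image is shared outside the organization",
    "UpdateAssumeRolePolicy": "check the role trust policy for unknown principals",
    "AuthorizeSecurityGroupIngress": "check for ingress rules open to the Internet",
}


def build_alert(event: Dict[str, Any]) -> Optional[Dict[str, str]]:
    """Return a chat message payload for a watched API call, else None."""
    name = event.get("eventName", "")
    assessment = WATCHED_CALLS.get(name)
    if assessment is None:
        return None

    actor = (event.get("userIdentity") or {}).get("arn", "unknown principal")
    account = event.get("recipientAccountId", "unknown")
    text = (
        f"{name} by {actor} in account {account}: {assessment}. "
        "Did you mean to do this?"
    )
    return {"text": text}


# Illustrative record with made-up identifiers.
event = {
    "eventName": "UpdateAssumeRolePolicy",
    "userIdentity": {"arn": "arn:aws:iam::111122223333:user/dev"},
    "recipientAccountId": "111122223333",
}
alert = build_alert(event)
```

Ending the message with a direct question is the ChatOps part: a quick "yes, that was me" from the project owner closes most alerts without a deep investigation.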

The tl;dr recommendation: handle key cloud misconfigurations in near real time and treat them as indicators of compromise until proven otherwise.

No cats were harmed in the drafting of this post.