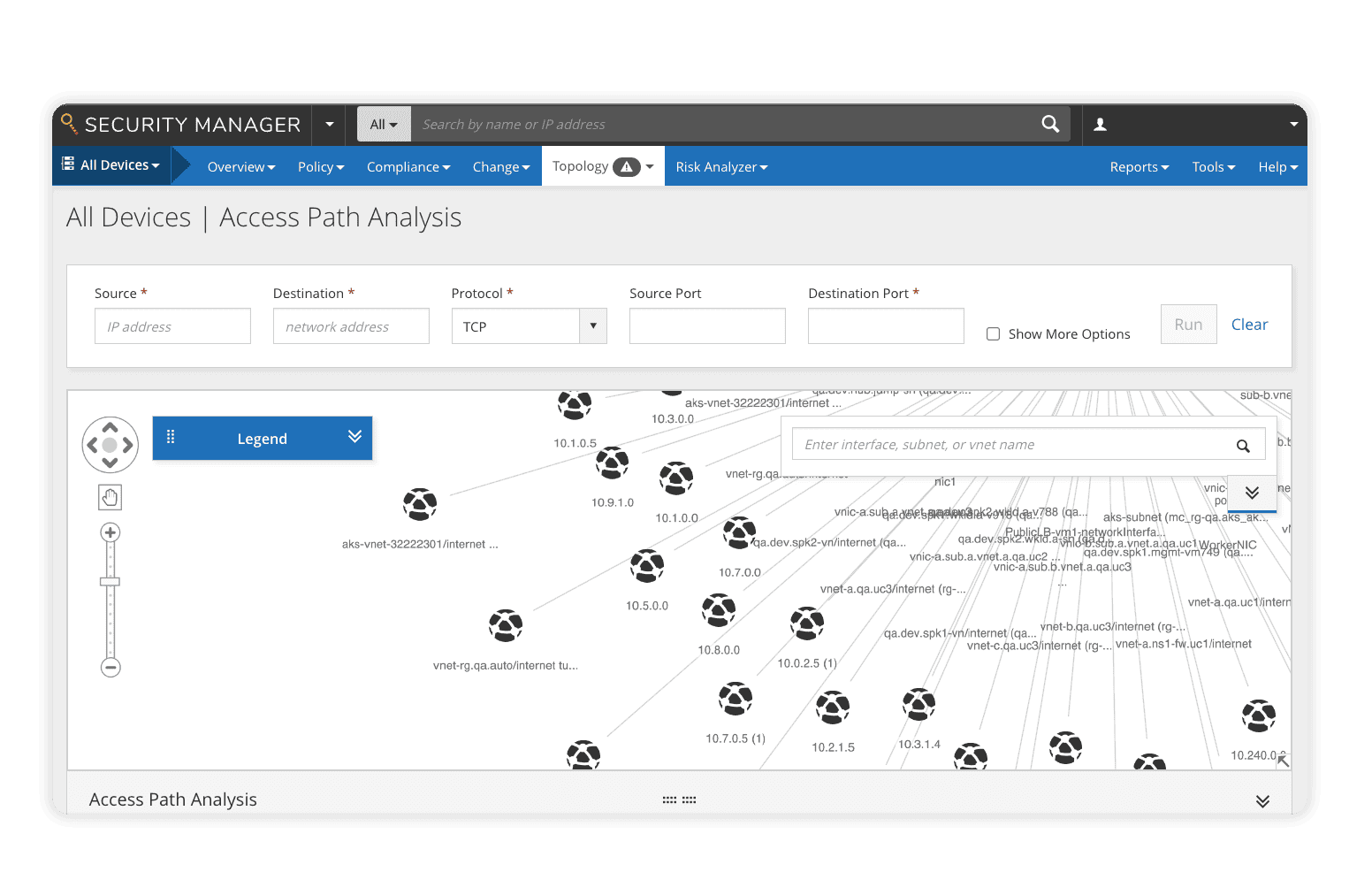

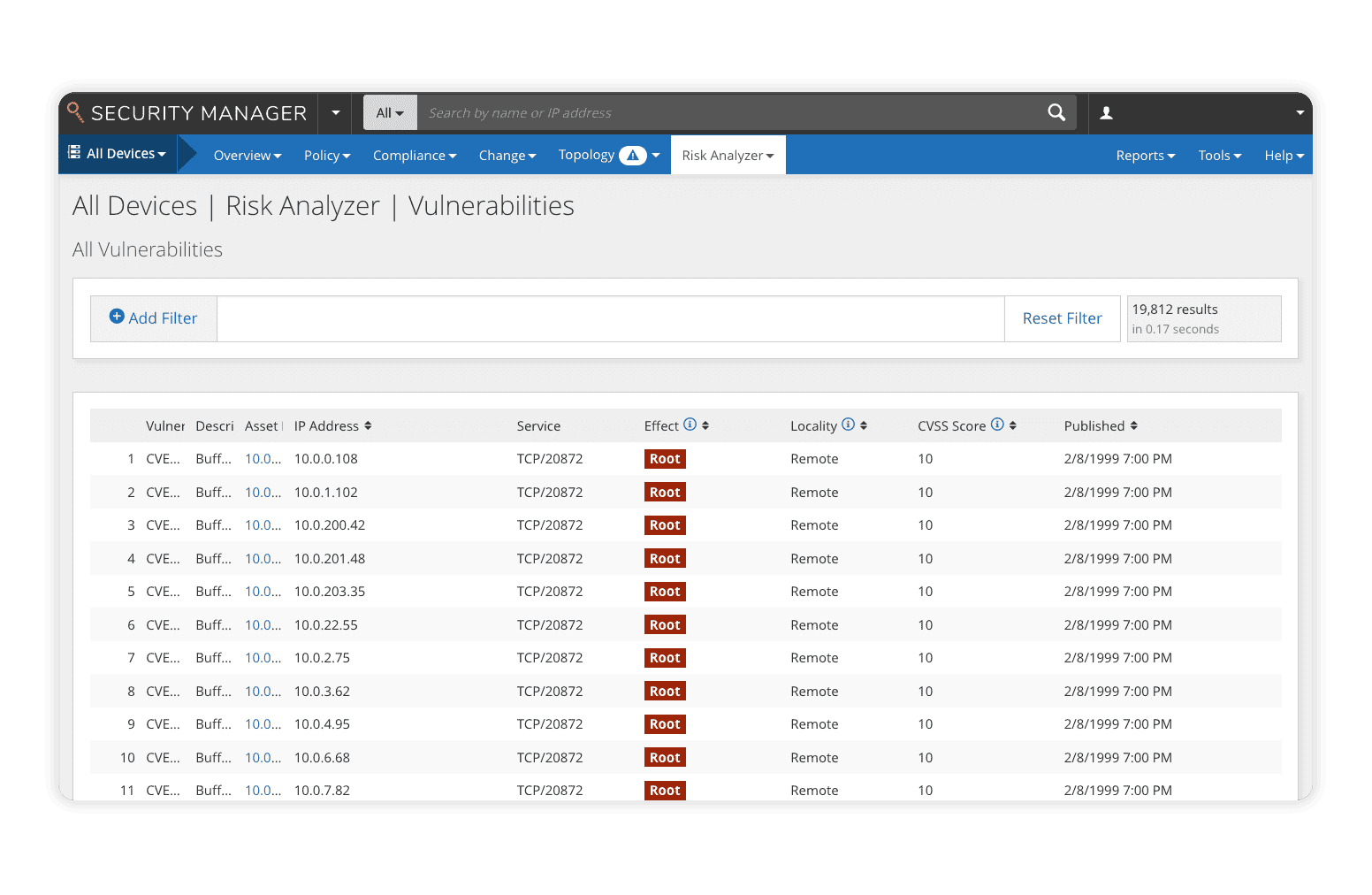

The Risk Analyzer module for Policy Manager delivers advanced vulnerability management by correlating third-party vulnerability data with network policy to identify network risk and potential attack paths. It provides real-time visibility into risk posture: it simulates attacks, calculates attack vectors, predicts potential impact, and presents the findings in an intuitive dashboard. Scenario testing also helps security teams prioritize patching by simulating patch deployments and measuring their effect on overall network risk.
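The idea of correlating vulnerability data with network policy can be illustrated with a minimal sketch. This is not Risk Analyzer's actual algorithm or API. It assumes a hypothetical model in which the policy is a set of allowed `(source, destination, port)` flows, each vulnerability is an exploitable `(host, port)` service, and risk is the summed asset value of every host an attacker can reach from an entry point. An attack path exists only where policy allows a hop *and* the target service is vulnerable. Patch scenarios are then simulated by removing one vulnerability at a time and measuring the drop in risk. All host names, ports, values, and helper names are invented for illustration.

```python
from collections import deque

# Hypothetical policy model: each allowed flow is (source_host, destination_host, port).
FLOWS = {
    ("internet", "web", 443),
    ("web", "app", 8080),
    ("app", "db", 5432),
    ("web", "db", 5432),
}
# Hypothetical vulnerability data: (host, port) services an attacker can exploit.
VULNS = {("web", 443), ("app", 8080), ("db", 5432)}
# Hypothetical asset values used to weight risk.
VALUE = {"web": 1, "app": 3, "db": 10}


def compromised_hosts(flows, vulns, entry):
    """Breadth-first search over allowed flows that land on a vulnerable service."""
    next_hops = {}
    for src, dst, port in flows:
        next_hops.setdefault(src, []).append((dst, port))
    reached = {entry}
    queue = deque([entry])
    while queue:
        host = queue.popleft()
        for dst, port in next_hops.get(host, []):
            # A hop counts only if policy allows it AND the target port is vulnerable.
            if dst not in reached and (dst, port) in vulns:
                reached.add(dst)
                queue.append(dst)
    return reached


def risk(flows, vulns, entry, value):
    """Sum the asset value of every reachable host, excluding the entry point itself."""
    return sum(value.get(h, 0) for h in compromised_hosts(flows, vulns, entry) if h != entry)


def rank_patches(flows, vulns, entry, value):
    """Simulate patching each vulnerability in turn; rank patches by risk removed."""
    baseline = risk(flows, vulns, entry, value)
    gains = [(baseline - risk(flows, vulns - {v}, entry, value), v) for v in vulns]
    gains.sort(key=lambda g: (-g[0], g[1]))  # largest reduction first, ties by (host, port)
    return baseline, gains


baseline, gains = rank_patches(FLOWS, VULNS, "internet", VALUE)
print("baseline risk:", baseline)
for gain, (host, port) in gains:
    print(f"patch {host}:{port} -> risk reduced by {gain}")
```

In this toy data, patching the internet-facing web service ranks first even though the database holds the most value, because it cuts the only entry path. A ranking by per-host severity alone would not capture that.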

Risk Analyzer delivers:

- Consolidated policy risk assessment and reporting, with custom and best-practice reports

- Risk and threat modeling including attack simulations, change risk simulations, and leak-path detection

- Pre-flight risk checks that automatically scan for risk before a change is deployed

- Real-time risk detection and response through violation detection, alerts, and mitigation strategies
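A pre-flight risk check can be sketched as a before/after comparison. Compute what an attacker can reach under the current policy, recompute with the proposed change applied, and flag any host that becomes newly reachable. This is a hypothetical illustration, not how Risk Analyzer is implemented. The flow and vulnerability model, the `preflight_check` helper, and the sample data are all assumptions.

```python
from collections import deque


def compromised_hosts(flows, vulns, entry):
    """Hosts reachable from `entry` via allowed flows onto vulnerable (host, port) services."""
    next_hops = {}
    for src, dst, port in flows:
        next_hops.setdefault(src, []).append((dst, port))
    reached = {entry}
    queue = deque([entry])
    while queue:
        host = queue.popleft()
        for dst, port in next_hops.get(host, []):
            if dst not in reached and (dst, port) in vulns:
                reached.add(dst)
                queue.append(dst)
    return reached


def preflight_check(flows, vulns, entry, proposed_flows):
    """Return hosts that would become newly compromisable if `proposed_flows` were deployed."""
    before = compromised_hosts(flows, vulns, entry)
    after = compromised_hosts(flows | proposed_flows, vulns, entry)
    return sorted(after - before)


# Hypothetical current policy and vulnerability data.
flows = {("internet", "web", 443)}
vulns = {("web", 443), ("db", 5432)}

risky_change = {("web", "db", 5432)}  # opens a path to a vulnerable database port
safe_change = {("web", "db", 22)}     # no known vulnerability on db:22 in this data

for name, change in [("risky", risky_change), ("safe", safe_change)]:
    exposed = preflight_check(flows, vulns, "internet", change)
    verdict = "BLOCK" if exposed else "ALLOW"
    print(f"{name}: {verdict} newly exposed={exposed}")
```

Returning the list of newly exposed hosts, rather than a bare pass/fail, gives the reviewer something concrete to act on when a change is flagged.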